The Abstraction That Changed Everything: Martin Fowler on Why AI Is Different

I wanted to understand AI's impact on software engineering. I found something more fundamental.

Martin Fowler’s conversation with Gergely Orosz on The Pragmatic Engineer stopped me mid-listen. Not because of hype or prediction, but because of clarity.

Martin has spent decades shaping how engineers think about architecture. His articles are the ones we bookmark, reference in design reviews, return to when we need grounding.

So when he calls AI “the biggest shift I’ve seen in programming during my career, comparable only to the shift to high-level languages,” I listen differently.

This isn’t trend-chasing. This is pattern recognition at the deepest level.

The Shift We Didn’t Notice

Here’s what hit me:

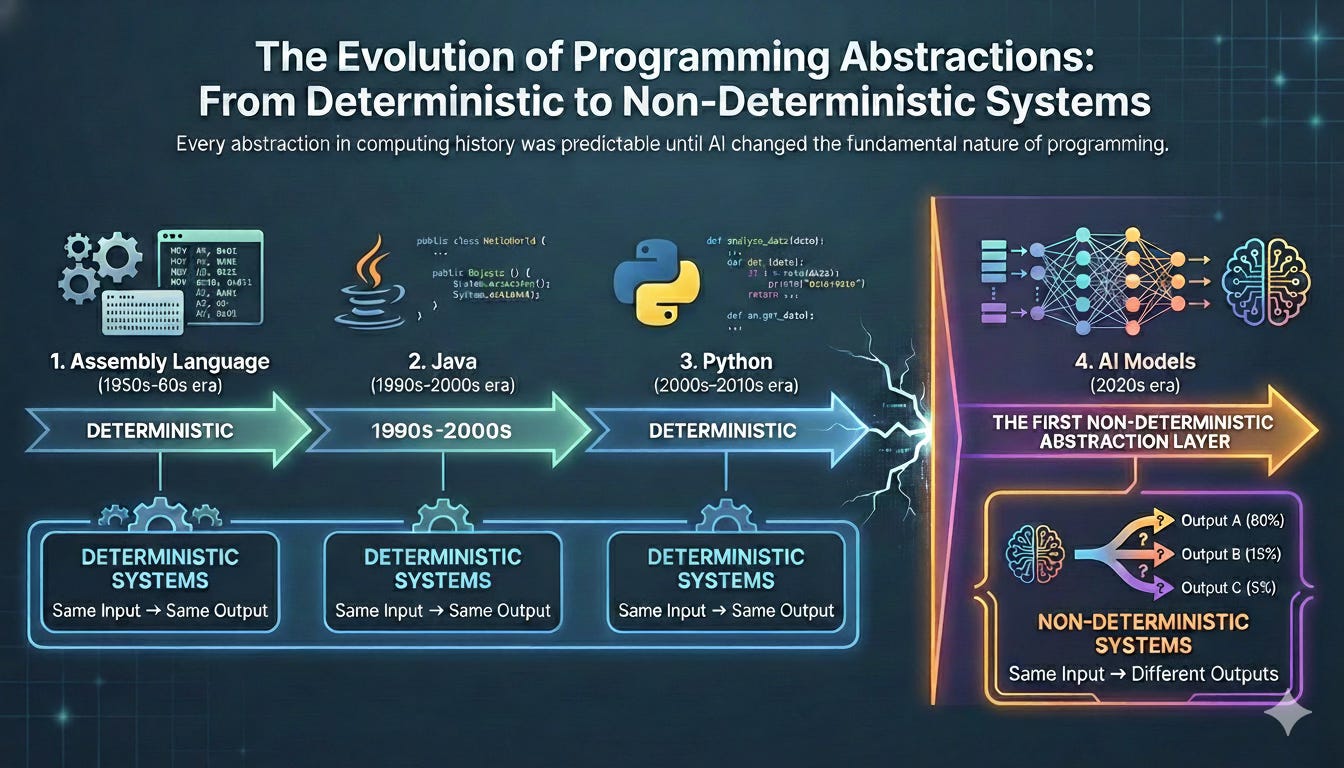

Think about every abstraction layer in computing history. Assembly language → C → Java → Python. Each step moved us further from the machine, made things simpler, more expressive.

But here’s what stayed constant through every single abstraction:

Determinism.

Run the same code with the same inputs? You get the same output. Every time. Predictably. Reliably.

That’s been the working assumption behind every one of those layers: a compiler or interpreter translates your code the same way each time.

Until now.

AI Broke the Pattern

AI models are the first abstraction layer that’s fundamentally non-deterministic.

Same prompt. Different outputs.

The creativity that makes AI powerful — the ability to understand context, generate novel solutions, work with ambiguity — is inseparable from its unpredictability.

We didn’t just add a tool. We changed the nature of what programming means.

The Bridge Builder’s Intuition

Martin shared something his wife said. She’s a structural engineer.

She doesn’t build to exactly what the math requires. She builds with tolerances. Safety margins. Because materials vary, conditions change, and if you cut too close to the edge, bridges collapse.

That’s the question we’re facing now:

What are the tolerances for non-determinism in software?

How much unpredictability can we handle before systems fail?

Where do we need determinism, and where can we embrace creative uncertainty?

The Elegant Paradox

Here’s what’s fascinating: the entire AI engineering movement is trying to make non-determinism deterministic again.

We’re building:

Detailed context and constraints to narrow outputs

Validation schemas to verify results

Rigorous testing (Martin emphasized this repeatedly)

Hybrid systems that combine AI with deterministic tools

We want the creative spark. The unexpected insight. The ability to understand complex legacy systems in ways we never could.

But we also need reliability.

So we’re wrapping guardrails around creativity.
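To make the guardrail idea concrete, here is a minimal sketch of a deterministic validation step wrapped around a non-deterministic generator. Everything in it is an illustrative assumption rather than something from the conversation: `make_fake_model` is a hypothetical stand-in for a real model call, and the `severity` and `summary` fields are an invented schema.

```python
import json
import random

# Hypothetical outputs a model might return for the same prompt.
CANDIDATES = [
    '{"severity": "high", "summary": "null pointer in parser"}',
    '{"severity": "urgent", "summary": "null pointer in parser"}',  # value outside the schema
    "Sure! Here is the JSON you asked for...",                      # not JSON at all
]

ALLOWED_SEVERITIES = {"low", "medium", "high"}  # assumed schema, for illustration only


def make_fake_model(seed):
    """Build a stand-in 'model': same prompt in, varying text out."""
    rng = random.Random(seed)

    def model(prompt):
        return rng.choice(CANDIDATES)

    return model


def validate(raw):
    """Deterministic check: return the parsed dict, or None if it breaks the schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    severity = data.get("severity")
    summary = data.get("summary")
    if not isinstance(severity, str) or severity not in ALLOWED_SEVERITIES:
        return None
    if not isinstance(summary, str) or not summary:
        return None
    return data


def generate_with_guardrails(model, prompt, max_attempts=5):
    """Retry the non-deterministic call until the deterministic check passes."""
    for _ in range(max_attempts):
        result = validate(model(prompt))
        if result is not None:
            return result
    raise ValueError("no valid output within the attempt budget")
```

The model stays free to vary. The validator does not, and the attempt budget acts as the safety margin: when it runs out, the caller gets an explicit failure instead of a plausible-looking bad answer.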

What Endures

The skills that make a great engineer haven’t changed.

Understanding architecture. Writing tests. Refactoring code. Thinking systematically.

These matter more now, not less.

Because someone needs to validate what AI produces. Someone needs to know when the output is brilliant and when it’s plausible-sounding nonsense.

Think of AI as a brilliant junior engineer. Incredibly fast. Sometimes insightful. Often needs code review.

You don’t ignore their contributions. But you don’t ship without verification either.

The Industry Moment

Martin also described something striking: traditional software is in a “depression” — lack of business investment, stagnant hiring — while AI experiences a bubble.

He said: “You never know how big bubbles grow, when they pop, or what comes after.”

It’s disorienting. Two opposite forces at once.

My Experience

I’ve spent years building systems where unpredictability isn’t acceptable. In enterprise applications, “sometimes it gives different results” isn’t creative — it’s a defect.

Yet I’m also exploring AI actively. Running experiments. Learning what works.

The tension Martin articulated is exactly what I feel.

The path forward isn’t choosing one or the other. It’s thinking like that structural engineer:

Use AI where its strengths shine — understanding messy codebases, generating initial implementations, exploring solution spaces.

But verify rigorously. Test thoroughly. Build in safety margins.
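One hypothetical way to express a safety margin in code is to ask the same question several times and accept the answer only when enough runs agree. This is a sketch under my own assumptions: the function name and the 0.8 threshold are illustrative, and `samples` stands for answers already collected from repeated runs of the same prompt.

```python
from collections import Counter


def accept_with_tolerance(samples, min_agreement=0.8):
    """Return the majority answer if enough samples agree, otherwise None."""
    if not samples:
        return None
    answer, count = Counter(samples).most_common(1)[0]
    if count / len(samples) >= min_agreement:
        return answer
    return None  # too much disagreement: escalate to a human or a deterministic tool


# Nine of ten runs agree, which clears the 0.8 tolerance.
stable = accept_with_tolerance(["retry"] * 9 + ["abort"])

# Only three of five runs agree, so the answer is rejected.
unstable = accept_with_tolerance(["retry", "retry", "retry", "abort", "ignore"])
```

Where the threshold sits is the engineering decision: a strict one for anything that touches production data, a looser one for brainstorming or exploration.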

The Deeper Pattern

We’re not just adopting a new tool.

We’re redefining what abstraction means in programming.

The patterns we figure out now — how to work with systems that are powerful but unpredictable — will shape how the next generation writes software.

Classical programming treated this as binary: a system was either deterministic or non-deterministic.

Maybe we’re entering something different: both, in superposition, with tolerances that let us navigate between them.

The Shift in Perspective

I started listening for predictions about AI’s future.

I ended up understanding something about programming’s fundamental nature.

Some things endure: principles, core skills, systematic thinking.

Some things evolve: the tools we use, the abstractions we build, the tolerances we set.

Martin’s insight isn’t about what’s coming. It’s about what already changed.

We’re the first generation of engineers working with abstractions that don’t promise predictability.

That’s not a bug. It’s the new design space.

🎧 Listen: The Pragmatic Engineer - Martin Fowler on AI

What’s your take? Are you thinking about tolerances in your AI work, or does determinism still feel non-negotiable? Drop a comment — I’m curious what patterns you’re seeing.